System Architecture

Built on open standards for flexibility, scalability, and best-in-class cost-performance

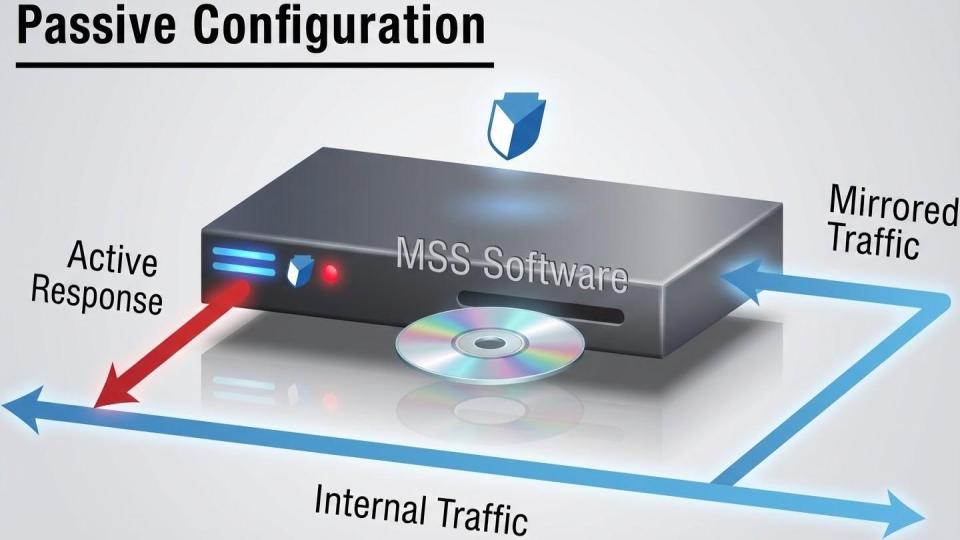

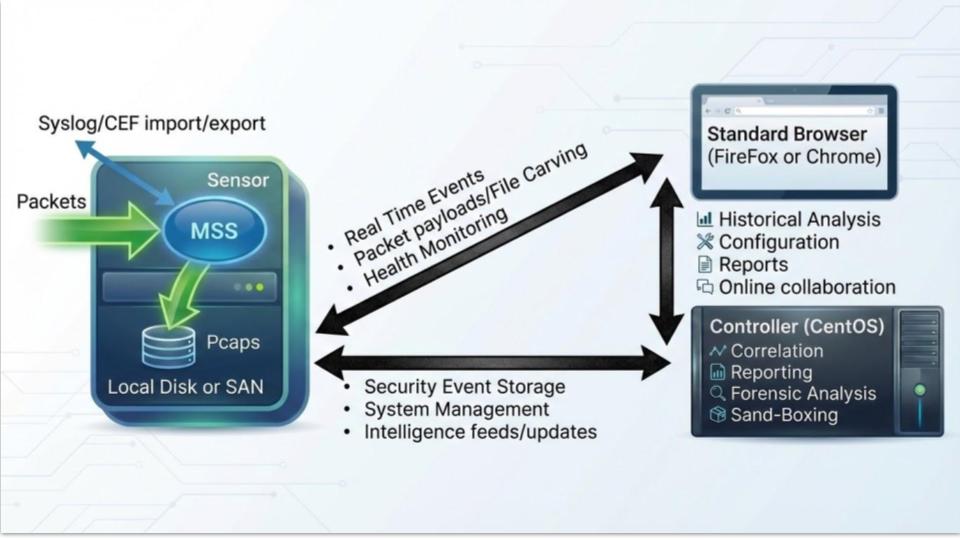

The MetaFlows Security System uses a distributed architecture with two main components: sensors and controllers. A single controller can manage from one to more than 1,000 sensors, providing centralized management and analysis at any scale.

Distributed sensor and controller architecture

Sensors

Sensors run on standard Rocky Linux 9 augmented with our multi-functional deep packet inspection software and proprietary kernel drivers. Key features:

- Full root access: Easily augment with site-specific applications or configurations

- Automatic updates: OS updates arrive through standard package management, and the MetaFlows software updates itself

- Flexible deployment: Install on customer hardware, VMware, or EC2 instances

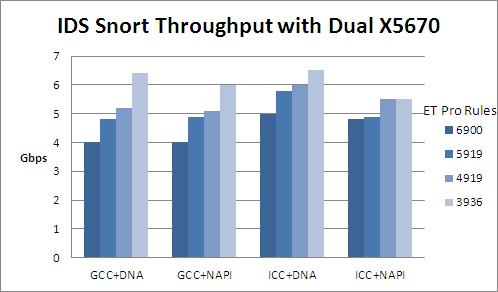

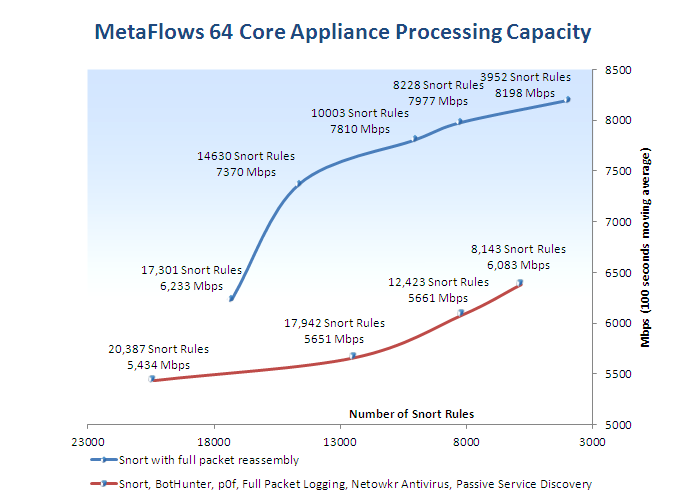

- High performance: Scales to 10 Gbps using PF_RING technology

Controller

The controller provides centralized management and analysis capabilities:

- Web GUI for system management of all sensors

- Receives and stores metadata continuously exported by sensors

- Web-based forensic analysis application

- Automated reports and email alerts

- Security intelligence feed management and distribution
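
The one-controller, many-sensors relationship described above can be illustrated with a small toy model. This is only a sketch under stated assumptions: the class names, record fields, and methods (`MetadataRecord`, `Controller`, `register`, `ingest`, `events_for`) are hypothetical and are not part of the MetaFlows software or its API.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class MetadataRecord:
    """One metadata event exported by a sensor (hypothetical fields)."""
    sensor_id: str
    timestamp: float
    summary: str


@dataclass
class Controller:
    """Toy controller: tracks its sensors and stores the metadata they export."""
    sensors: set = field(default_factory=set)
    store: dict = field(default_factory=lambda: defaultdict(list))

    def register(self, sensor_id: str) -> None:
        # A single controller may manage many sensors.
        self.sensors.add(sensor_id)

    def ingest(self, record: MetadataRecord) -> None:
        # Reject metadata from sensors this controller does not manage.
        if record.sensor_id not in self.sensors:
            raise KeyError(f"unknown sensor: {record.sensor_id}")
        self.store[record.sensor_id].append(record)

    def events_for(self, sensor_id: str) -> list:
        # Return a copy so callers cannot mutate the stored history.
        return list(self.store.get(sensor_id, []))


controller = Controller()
for n in range(3):
    controller.register(f"sensor-{n}")

controller.ingest(
    MetadataRecord("sensor-1", 1700000000.0, "suspicious outbound connection")
)
print(len(controller.events_for("sensor-1")))
```

Keeping the stored metadata keyed by sensor mirrors the idea that analysis starts from centrally stored metadata, while payload data stays on the sensor until an analyst queries for it.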

Typical Workflow

Detection

Sensor detects suspicious behavior through multi-functional network traffic analysis

Alert

Metadata and automated incident report sent in real time to controller and/or SIEM

Notification

Controller triggers email alert or analyst sees event on real-time console

Analysis

Analyst reviews metadata through web interface or SIEM, queries sensor for payload data

Documentation

Analyst files incident report with relevant metadata and payload evidence

Remediation

Analyst applies remediation policies through Soft IPS or verifies existing protections
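
The six workflow steps form a simple ordered sequence, which can be sketched as follows. The `Stage` enum and the `next_stage` and `walk` helpers are illustrative names only, chosen for this example; they do not correspond to anything in the MetaFlows product.

```python
from enum import Enum


class Stage(Enum):
    """Workflow steps in the order listed above."""
    DETECTION = 1
    ALERT = 2
    NOTIFICATION = 3
    ANALYSIS = 4
    DOCUMENTATION = 5
    REMEDIATION = 6


def next_stage(stage):
    """Return the stage that follows, or None after the last one."""
    members = list(Stage)  # Enum iteration preserves definition order
    index = members.index(stage)
    return members[index + 1] if index + 1 < len(members) else None


def walk(start=Stage.DETECTION):
    """List the stage names from `start` through the end of the workflow."""
    path = []
    stage = start
    while stage is not None:
        path.append(stage.name.title())
        stage = next_stage(stage)
    return path


print(" -> ".join(walk()))
# prints: Detection -> Alert -> Notification -> Analysis -> Documentation -> Remediation
```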